Introduction to load balancing

Load balancing distributes network traffic across multiple servers or resources. Load balancers are key components of modern network architectures, helping to ensure high availability, scalability, and good performance of services. This article gives an overview of load balancers, covering their theory, design considerations, persistence types, and load balancing methods, and then lists commercial and open-source load balancer solutions. Understanding these topics helps organizations make informed decisions when implementing load balancing.

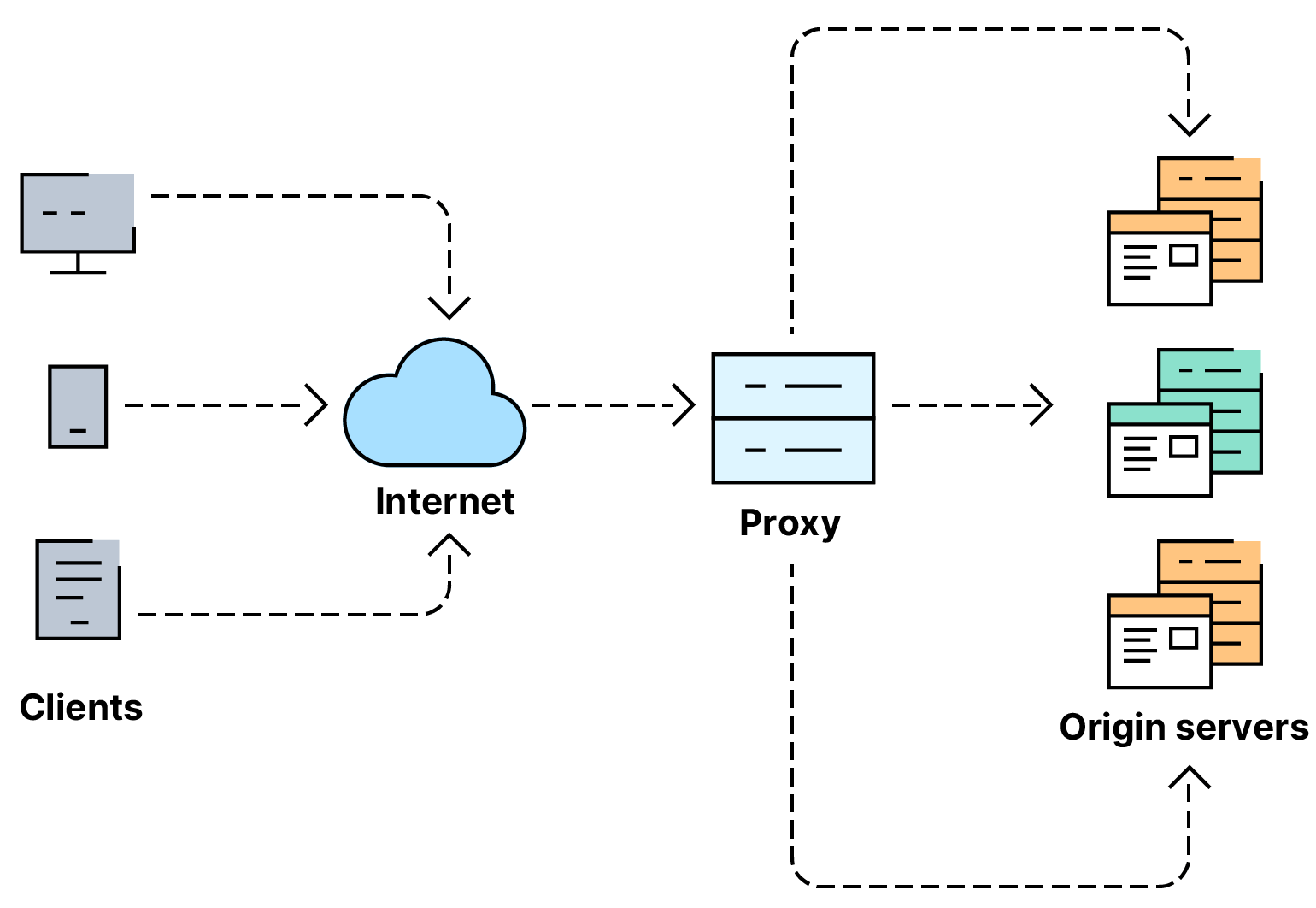

In modern computer networks, load balancers are employed to optimize resource utilization, enhance performance, and ensure high availability of services. They act as intermediaries between client requests and the backend servers, distributing incoming traffic in an efficient and scalable manner.

Load balancing features

Load balancers are designed to handle different types of traffic and distribute it across multiple servers or resources. The key objectives of load balancers include:

- High Availability: Load balancers ensure that services remain available by distributing the traffic among multiple servers. If one server fails, the load balancer redirects traffic to healthy servers.

- Scalability: Load balancers allow for horizontal scaling by seamlessly adding or removing servers from the pool based on traffic demands.

- Performance Optimization: Load balancers intelligently distribute traffic to ensure optimal utilization of resources, reducing response times and improving overall performance.
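The availability and scalability objectives above can be illustrated with a minimal sketch. It assumes a hypothetical `Pool` class that rotates over healthy backends only; the class, its method names, and the backend labels are illustrative and do not correspond to any particular product. In a real deployment, a health checker would call something like `mark` after probing each server.

```python
from dataclasses import dataclass
from itertools import count


@dataclass
class Backend:
    name: str            # hypothetical server label
    healthy: bool = True


class Pool:
    """Rotates requests over the backends that are currently healthy."""

    def __init__(self, backends):
        self.backends = list(backends)
        self._counter = count()

    def add(self, backend):
        # Horizontal scaling: a new server joins the rotation.
        self.backends.append(backend)

    def mark(self, name, healthy):
        # Record the result of a health check for the named backend.
        for backend in self.backends:
            if backend.name == name:
                backend.healthy = healthy

    def pick(self):
        # Failed servers are skipped, so traffic only reaches healthy ones.
        alive = [b for b in self.backends if b.healthy]
        if not alive:
            raise RuntimeError("no healthy backends available")
        return alive[next(self._counter) % len(alive)]
```

Marking a backend as unhealthy removes it from the rotation without any change on the client side, and adding a backend makes it eligible for the next request.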

Load Balancer Design Considerations

When designing a load balancer, several factors should be considered.

Persistence types

Load balancers can maintain different types of persistence, such as the following.

- Source IP Affinity: Requests from the same IP are directed to the same server.

- Cookie-Based Persistence: A cookie is set upon the initial request, ensuring subsequent requests from the same client are directed to the same server.

- SSL Session Persistence: Requests that belong to the same SSL/TLS session, identified by its session ID, are sent to the same server for the duration of the session.
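As a rough illustration of the first two persistence types, the sketch below pins a client to a server either by hashing its source IP address or by reading a cookie set on the first response. The server names, the `LB_SERVER` cookie name, and both function names are assumptions made for this example. Real load balancers typically encode or obfuscate the server identifier in the cookie rather than storing it in plain text.

```python
import hashlib

SERVERS = ["app-1", "app-2", "app-3"]  # hypothetical backend names
COOKIE_NAME = "LB_SERVER"              # hypothetical cookie name


def by_source_ip(client_ip, servers=SERVERS):
    """Source IP affinity: hash the client address onto the server list."""
    digest = hashlib.sha256(client_ip.encode("utf-8")).digest()
    return servers[int.from_bytes(digest[:8], "big") % len(servers)]


def by_cookie(cookies, fallback_ip, servers=SERVERS):
    """Cookie-based persistence.

    Returns (server, cookie_to_set). cookie_to_set is None when the
    client already presented a cookie naming a known server.
    """
    pinned = cookies.get(COOKIE_NAME)
    if pinned in servers:
        return pinned, None
    # First request (or stale cookie): choose a server and ask the
    # client to remember it.
    server = by_source_ip(fallback_ip, servers)
    return server, (COOKIE_NAME, server)
```

Cookie-based persistence keeps working when a client's IP address changes, whereas source IP affinity sends every client behind the same NAT address to the same server.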

Load Balancing Methods

Load balancers employ various algorithms to distribute traffic. Some common methods include the following:

- Round Robin: The load balancer maintains a list of servers and forwards each request to the next server in the list, wrapping around at the end. Each server therefore receives an equal share of requests, regardless of how expensive each request is or how busy the server currently is.

- Least Connections: The load balancer directs each incoming request to the server with the fewest active connections. This distributes traffic according to each server's current workload, so lightly loaded servers receive new requests until connection counts even out across the pool.

- IP Hash: The load balancer computes a hash of the client's IP address and maps it to one of the available servers. Requests from the same client IP are consistently routed to the same server as long as the set of available servers is unchanged, which provides session persistence and keeps session-related data on one server.

- Weighted Round Robin: Servers are assigned weights based on their capacity or performance, and traffic is distributed in proportion to those weights. This lets administrators send a greater share of the workload to more powerful servers.

- Least Response Time: The load balancer periodically measures each server's response time and directs incoming requests to the server that is currently responding fastest, adjusting the distribution as the measurements change.

- Source IP Affinity (Sticky Sessions): Source IP Affinity, one form of sticky sessions, maintains session persistence based on the client's IP address. Once a client establishes a connection with a server, subsequent requests from the same IP address are directed to the same server, so that session state is consistently handled by that server.

- Content-Based Routing: Content-Based Routing involves analyzing the content or characteristics of the incoming request to determine the appropriate server. Load balancers inspect request headers, URLs, or other attributes to make routing decisions. This technique is useful for scenarios where specific types of requests need to be directed to specialized servers based on their capabilities.

- SSL/TLS Offloading: SSL/TLS Offloading, also known as SSL termination, involves the load balancer handling the SSL/TLS encryption and decryption processes. The load balancer receives encrypted requests from clients, decrypts them, and forwards the decrypted requests to the backend servers. This offloads the resource-intensive SSL/TLS operations from the servers, improving their performance and scalability.

- DNS Load Balancing: DNS Load Balancing uses DNS to distribute incoming requests among multiple servers. The authoritative DNS server responds to queries for the service name with multiple IP addresses, rotating their order between responses to spread the traffic. Each client selects one of the returned addresses and establishes a connection directly with that server.
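Several of the selection methods above reduce to a few lines of logic. The following is a minimal sketch rather than any product's implementation: all function names and server labels are hypothetical, weights are assumed to be positive integers, and the connection counts and latencies are assumed to be collected elsewhere by the load balancer.

```python
import itertools


def round_robin(servers):
    """Yield servers in a repeating sequential order."""
    return itertools.cycle(servers)


def weighted_round_robin(weights):
    """Yield servers in proportion to integer weights.

    Uses simple list expansion, so a server with weight 3 appears three
    times in a row per cycle. Production balancers often interleave the
    picks more smoothly, which is not shown here.
    """
    expanded = [name for name, w in weights.items() for _ in range(w)]
    return itertools.cycle(expanded)


def least_connections(active):
    """Return the server with the fewest active connections."""
    return min(active, key=active.get)


def least_response_time(latencies_ms):
    """Return the server with the lowest recently observed response time."""
    return min(latencies_ms, key=latencies_ms.get)
```

For example, `weighted_round_robin({"big": 3, "small": 1})` sends three of every four requests to `big`, while `least_connections({"a": 4, "b": 1})` picks `b`.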

Commercial and Open Source Load Balancer Systems

Below is a list of commercial and open-source load balancer systems:

Commercial Load Balancer Systems

- F5 Networks - https://www.f5.com/

- Citrix NetScaler ADC - https://www.citrix.com/

- Azure load balancing services. For a full list and comparison of the various Azure load balancing services, consult the following article: https://stefanos.cloud/azure-load-balancing-service-options/.

- A10 Networks - https://www.a10networks.com/

- Barracuda Networks - https://www.barracuda.com/

- Radware - https://www.radware.com/

- Kemp Technologies - https://kemptechnologies.com/

- Avi Networks - https://avinetworks.com/

- NGINX (now owned by F5) - https://www.nginx.com/

- HAProxy Technologies - https://www.haproxy.com/

- Amazon Web Services (AWS) Elastic Load Balancer - https://aws.amazon.com/elasticloadbalancing/

- Windows Network Load Balancing (NLB) is a built-in load balancing feature of Microsoft Windows Server operating systems. NLB distributes incoming network traffic across a cluster of servers, improving the performance, scalability, and availability of applications and services.

- Microsoft IIS Application Request Routing (ARR) distributes incoming HTTP and HTTPS traffic across multiple backend servers or applications. ARR acts as a reverse proxy, receiving client requests and forwarding them to the appropriate backend server based on configured routing rules.

Open-Source Load Balancer Systems

- HAProxy - http://www.haproxy.org/

- NGINX - https://nginx.org/

- NGINX Plus (the commercial version of NGINX; not open source, listed here for comparison with NGINX)

- Apache HTTP Server - https://httpd.apache.org/

- Envoy Proxy - https://www.envoyproxy.io

- Traefik - https://traefik.io/

- OpenResty - https://openresty.org/

- Caddy - https://caddyserver.com/

- Pound - http://www.apsis.ch/pound/

- Vulcand - https://vulcand.github.io/