Case #

You have two or more physical Hyper-V hosts on which you need to configure a Hyper-V cluster to host virtual machines. This article provides step-by-step guidance on how to deploy a Hyper-V cluster with PowerShell. This article is a work in progress.

If you need to gracefully delete a Windows Failover Cluster (WFC), review the following KB article: https://stefanos.cloud/kb/how-to-delete-a-windows-failover-cluster-via-powershell/.

The final outcome of this article will be a fully polished PowerShell script for efficiently deploying a Hyper-V cluster (coming soon).

Solution #

Pre-requisites #

First off, you should have configured the core/distribution switching infrastructure and the storage infrastructure for supporting your Hyper-V cluster. Designing the proper networking and storage environment for any Windows Failover Cluster (WFC) is outside the scope of this article. You can find out more about design considerations and best practices in my Windows Failover Clustering Design Handbook.

Each Hyper-V host that will join the WFC Hyper-V cluster needs sufficient RAM, sufficient storage IOPS on its local disks and a sufficient number of physical Network Interface Cards (NICs). You will need to ensure redundancy for the shared storage cabling paths, with LACP configured on the switch ports.

Ensure that all your Hyper-V hosts have the latest Windows updates installed, as well as the latest drivers and firmware from the hardware vendor (for example for pNICs and hard disks).
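The following is a minimal, read-only sketch for taking stock of a host before you start. It only uses in-box cmdlets and does not change any configuration. How many updates to list and which NIC properties to show are arbitrary choices made for illustration.

```powershell
# Read-only checks; nothing here changes the host configuration.

# Operating system version and build
Get-CimInstance -ClassName Win32_OperatingSystem |
    Select-Object -Property Caption, Version, BuildNumber

# Five most recently installed Windows updates
Get-HotFix |
    Sort-Object -Property InstalledOn -Descending |
    Select-Object -First 5 -Property HotFixID, Description, InstalledOn

# Physical NICs with link speed and driver details
Get-NetAdapter -Physical |
    Sort-Object -Property Name |
    Format-Table -Property Name, InterfaceDescription, LinkSpeed, Status, DriverVersion, DriverDate -AutoSize
```

Compare the driver versions and dates against what your hardware vendor currently publishes. Firmware levels are usually not visible here and need the vendor's own tooling.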

Remember that when setting up a single (standalone) Hyper-V server, most of the High Availability features of a WFC are not applicable, such as Live Migration, Quick Migration and Storage Multipathing.

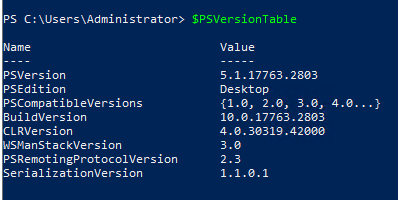

Ensure that you have the latest PowerShell version installed on all Hyper-V hosts. Windows PowerShell (the Desktop edition) runs on Windows only, while PowerShell Core runs on any supported operating system, including macOS and Linux. The latest version of Windows PowerShell is 5.1 and no new versions will be released.

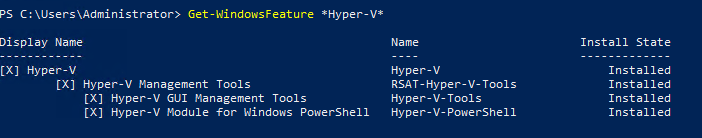

PowerShell must have the Hyper-V module imported. The module should already be installed as part of the Hyper-V management tools.
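As a quick check of both points, the sketch below prints the version and edition of the current session and then looks for the Hyper-V module. It assumes the Hyper-V management tools are already installed on the host. If they are not, `Get-Module` returns nothing and `Import-Module` fails until the features in the next section have been installed.

```powershell
# Show the PowerShell version and edition ("Desktop" or "Core") of the current session
$PSVersionTable.PSVersion
$PSVersionTable.PSEdition

# Check that the Hyper-V module is installed, then import it into the session
Get-Module -ListAvailable -Name Hyper-V
Import-Module -Name Hyper-V

# Count the commands that the module exposes as a quick sanity check
(Get-Command -Module Hyper-V).Count
```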

Important note

The PowerShell cmdlets below must be executed in an elevated PowerShell terminal on each physical Hyper-V host. Use these cmdlets at your own risk, as they were written for a specific environment and configuration and may need changes in your own. Test in a lab environment first before applying anything to production. All variables and environment-specific elements must be changed to reflect your own environment.

Install server roles and features #

Run the following PowerShell cmdlets to install the required server roles and features on each Hyper-V host.

Import-Module ServerManager

#Assuming multipath will be enabled for your shared storage, for example iSCSI

Install-WindowsFeature -Name Multipath-IO

Install-WindowsFeature -Name Failover-Clustering -IncludeManagementTools

Install-WindowsFeature -Name Hyper-V -IncludeManagementTools

Install-WindowsFeature -Name Hyper-V-PowerShell

PowerShell configuration cmdlets #

Run the following cmdlets for configuring IP addressing of the Hyper-V hosts' physical NICs.

#The two commands below must be run manually on each Hyper-V host to configure iSCSI storage from an example storage vendor. Refer to the storage vendor documentation for further instructions.

#mpiocpl.exe -> add support for iSCSI devices

#mpclaim -s -d

#Install the storage vendor iSCSI driver on the Hyper-V host
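If you would rather script the MPIO step than use mpiocpl.exe, the in-box MPIO module offers equivalent cmdlets. The sketch below assumes that the Microsoft DSM is used for your iSCSI storage and that round robin is an acceptable default policy. Both are assumptions made for illustration, so check your storage vendor's guidance, especially if the vendor ships its own DSM.

```powershell
# Let the Microsoft DSM automatically claim iSCSI-attached devices for MPIO
Enable-MSDSMAutomaticClaim -BusType iSCSI

# Example only: use round robin as the default load-balancing policy
Set-MSDSMGlobalDefaultLoadBalancePolicy -Policy RR

# Review the resulting settings
Get-MSDSMAutomaticClaimSettings
Get-MSDSMGlobalDefaultLoadBalancePolicy
```

A restart of the host may be needed before the claim takes effect for devices that are already connected.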

# Adjust subnetting as per your requirements. The below configuration is only an example.

# The example below assumes four (4) NICs per physical host dedicated to shared storage traffic and another four (4) NICs per physical host dedicated to cluster traffic.

#NIC1, NIC3, NIC5, NIC7 are used for shared storage traffic

#NIC2, NIC4, NIC6, NIC8 are used for WFC cluster traffic

#Hyper-V Host 1

New-NetIPAddress -InterfaceAlias "NIC1" -IPAddress 192.168.10.11 -PrefixLength 24

New-NetIPAddress -InterfaceAlias "NIC3" -IPAddress 192.168.11.11 -PrefixLength 24

New-NetIPAddress -InterfaceAlias "NIC5" -IPAddress 192.168.12.11 -PrefixLength 24

New-NetIPAddress -InterfaceAlias "NIC7" -IPAddress 192.168.13.11 -PrefixLength 24

#Hyper-V Host 2

New-NetIPAddress -InterfaceAlias "NIC1" -IPAddress 192.168.10.12 -PrefixLength 24

New-NetIPAddress -InterfaceAlias "NIC3" -IPAddress 192.168.11.12 -PrefixLength 24

New-NetIPAddress -InterfaceAlias "NIC5" -IPAddress 192.168.12.12 -PrefixLength 24

New-NetIPAddress -InterfaceAlias "NIC7" -IPAddress 192.168.13.12 -PrefixLength 24
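The per-host commands above differ only in the last octet, so they can be generated in a loop. This is a minimal sketch that reuses the example subnets and NIC aliases from above. The `$hostIndex` variable and the `$storageNics` table are hypothetical names introduced here for illustration.

```powershell
# Hypothetical per-host index: 1 for Hyper-V host 1, 2 for Hyper-V host 2, and so on
$hostIndex = 1

# Storage NIC alias -> first three octets of its subnet (values from the example above)
$storageNics = [ordered]@{
    'NIC1' = '192.168.10'
    'NIC3' = '192.168.11'
    'NIC5' = '192.168.12'
    'NIC7' = '192.168.13'
}

foreach ($nic in $storageNics.GetEnumerator()) {
    # Host 1 gets .11, host 2 gets .12, matching the addressing used above
    $ipAddress = '{0}.{1}' -f $nic.Value, (10 + $hostIndex)
    New-NetIPAddress -InterfaceAlias $nic.Key -IPAddress $ipAddress -PrefixLength 24
}
```

Only `$hostIndex` changes between hosts, which keeps the addressing scheme in one place.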

#Configure the iSCSI initiator on Hyper-V host 1, apply the same to the other Hyper-V hosts

New-IscsiTargetPortal -TargetPortalAddress <Shared_Storage_IP_address_1>

New-IscsiTargetPortal -TargetPortalAddress <Shared_Storage_IP_address_2>

New-IscsiTargetPortal -TargetPortalAddress <Shared_Storage_IP_address_3>

New-IscsiTargetPortal -TargetPortalAddress <Shared_Storage_IP_address_4>

$targets = Get-IscsiTarget

Connect-IscsiTarget -NodeAddress $targets[0].NodeAddress -IsPersistent $true

Connect-IscsiTarget -NodeAddress $targets[1].NodeAddress -IsPersistent $true

Connect-IscsiTarget -NodeAddress $targets[2].NodeAddress -IsPersistent $true

Connect-IscsiTarget -NodeAddress $targets[3].NodeAddress -IsPersistent $true

#If a session was connected without -IsPersistent, register it so that it reconnects after a reboot

# Get-IscsiSession | Register-IscsiSession

Get-IscsiSession
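The initiator configuration can also be written as a loop over the discovered targets, using the cmdlets of the in-box iSCSI module (`Get-IscsiTarget`, `Connect-IscsiTarget`, `Get-IscsiSession`). This is a sketch under a few assumptions: the portal addresses in `$portalAddresses` are placeholder documentation addresses, every discovered target should be connected, and multipath is wanted on every session.

```powershell
# Make sure the Microsoft iSCSI Initiator service is running and starts at boot
Set-Service -Name MSiSCSI -StartupType Automatic
Start-Service -Name MSiSCSI

# Placeholder portal addresses; replace with the IP addresses of your shared storage
$portalAddresses = '192.0.2.11', '192.0.2.12', '192.0.2.13', '192.0.2.14'

foreach ($address in $portalAddresses) {
    New-IscsiTargetPortal -TargetPortalAddress $address
}

# Connect every discovered target that is not connected yet.
# -IsPersistent restores the session after a reboot; -IsMultipathEnabled hands it to MPIO.
Get-IscsiTarget |
    Where-Object { -not $_.IsConnected } |
    ForEach-Object {
        Connect-IscsiTarget -NodeAddress $_.NodeAddress -IsPersistent $true -IsMultipathEnabled $true
    }

# Review the resulting sessions
Get-IscsiSession |
    Format-Table -Property TargetNodeAddress, IsConnected, IsPersistent -AutoSize
```

Looping over the discovered targets avoids hard-coding array indexes, so the same lines work whether the storage presents two targets or eight.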

#Configure MPIO using the Windows Server configuration tool

# Also refer to the following article for more details on the Windows Server MPIO feature configuration: https://docs.microsoft.com/en-us/powershell/module/mpio/?view=windowsserver2022-ps.

#Create a NIC team for the NICs on each Hyper-V host to support the WFC cluster and client traffic

#Adjust the TeamingMode and LoadBalancingAlgorithm parameters as per your environment requirements

New-NetLbfoTeam -Name "HyperVTeam01" -TeamMembers "NIC2", "NIC4", "NIC6", "NIC8" -TeamingMode LACP -LoadBalancingAlgorithm TransportPorts

#Create a Hyper-V vSwitch object and bind it to the NIC team

New-VMSwitch -Name "HyperVSwitch01" -AllowManagementOS $false -MinimumBandwidthMode Weight -NetAdapterName "HyperVTeam01"

#Or, if you prefer to use Switch Embedded Teaming (SET) as an SDN alternative to traditional NIC teaming, you can run the cmdlet below instead.

New-VMSwitch -Name "SETTeam01" -NetAdapterName "NIC2", "NIC4", "NIC6", "NIC8" -EnableEmbeddedTeaming $true
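Whichever teaming option you choose, it is worth confirming the result before building on top of it. The sketch below uses the example team and switch names from above. Run only the part that matches the option you used, since the other cmdlet will report that the object does not exist.

```powershell
# Traditional NIC teaming: team status and its member adapters
Get-NetLbfoTeam -Name "HyperVTeam01"
Get-NetLbfoTeamMember -Team "HyperVTeam01"

# All virtual switches on the host, including whether SET is enabled on each
Get-VMSwitch |
    Format-Table -Property Name, SwitchType, EmbeddedTeamingEnabled, AllowManagementOS -AutoSize

# SET only: teaming details of the embedded team
Get-VMSwitchTeam -Name "SETTeam01"
```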

#Configure pNICs with Jumbo frames, check with the pNIC manufacturer for optimal Jumbo frame values and other possible optimized configuration values

Get-NetAdapterAdvancedProperty -Name "NIC*" -DisplayName "Jumbo Frame" | Set-NetAdapterAdvancedProperty -RegistryValue "9216"

Get-NetAdapterAdvancedProperty -Name "NIC*" -DisplayName "Jumbo Mtu" | Set-NetAdapterAdvancedProperty -RegistryValue "9000"

Get-NetAdapterAdvancedProperty -Name "NIC*"

#Configure vNICs. Three virtual networks should be created at minimum, namely Management, Cluster and LiveMigration

Add-VMNetworkAdapter -ManagementOS -Name "Management" -SwitchName "HyperVSwitch01"

Add-VMNetworkAdapter -ManagementOS -Name "Cluster" -SwitchName "HyperVSwitch01"

Add-VMNetworkAdapter -ManagementOS -Name "LiveMigration" -SwitchName "HyperVSwitch01"

#Configure VLANs in the vNICs, example values provided for VLAN IDs

Set-VMNetworkAdapterVlan -ManagementOS -VMNetworkAdapterName "Management" -Access -VlanId 20

Set-VMNetworkAdapterVlan -ManagementOS -VMNetworkAdapterName "Cluster" -Access -VlanId 21

Set-VMNetworkAdapterVlan -ManagementOS -VMNetworkAdapterName "LiveMigration" -Access -VlanId 22

#Configure IP addressing of the vNICs and set DNS servers as per your network subnetting design, the values below are only samples

New-NetIPAddress -InterfaceAlias "vEthernet (Management)" -IPAddress 10.0.1.21 -DefaultGateway 10.0.1.1 -PrefixLength 24

Set-DnsClientServerAddress -InterfaceAlias "vEthernet (Management)" -ServerAddresses 10.0.0.2, 10.0.0.3

New-NetIPAddress -InterfaceAlias "vEthernet (Cluster)" -IPAddress 10.0.2.21 -PrefixLength 24

New-NetIPAddress -InterfaceAlias "vEthernet (LiveMigration)" -IPAddress 10.0.3.21 -PrefixLength 24

#Configure Jumbo frames in the vNICs

Get-NetAdapterAdvancedProperty -Name "vEthernet (LiveMigration)", "vEthernet (Management)", "vEthernet (Cluster)" -DisplayName "Jumbo Packet" | Set-NetAdapterAdvancedProperty -RegistryValue "9014"

#Configure bandwidth allocation settings for the virtual network adapters

Set-VMNetworkAdapter -ManagementOS -Name "Management" -MinimumBandwidthWeight 10

Set-VMNetworkAdapter -ManagementOS -Name "Cluster" -MinimumBandwidthWeight 30

Set-VMNetworkAdapter -ManagementOS -Name "LiveMigration" -MinimumBandwidthWeight 60

#Enable VMQ on the network adapters of the Hyper-V hosts. Check with your hardware vendor to ensure that activating VMQ is supported and will not cause any performance or operational issues.

Get-NetAdapterVmq -IncludeHidden

Enable-NetAdapterVmq -Name "NIC1", "NIC2", "NIC3", "NIC4", "NIC5", "NIC6", "NIC7", "NIC8"
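After configuring the vNICs, a few read-only checks help confirm that VLANs, addressing and Jumbo frames ended up as intended. This is a sketch, not a complete validation. The peer address 10.0.3.22 is a hypothetical second host on the LiveMigration network, and the `*JumboPacket` keyword is the standardized registry keyword that most drivers expose, which is assumed to apply to your adapters.

```powershell
# Host vNICs and the switch each one is bound to
Get-VMNetworkAdapter -ManagementOS |
    Format-Table -Property Name, SwitchName, MacAddress, Status -AutoSize

# VLAN mode and VLAN ID per host vNIC
Get-VMNetworkAdapterVlan -ManagementOS

# IPv4 addresses assigned to the vEthernet interfaces
Get-NetIPAddress -AddressFamily IPv4 |
    Where-Object { $_.InterfaceAlias -like 'vEthernet (*' } |
    Format-Table -Property InterfaceAlias, IPAddress, PrefixLength -AutoSize

# Jumbo frame setting per vNIC
Get-NetAdapterAdvancedProperty -Name "vEthernet (*" -RegistryKeyword "*JumboPacket" |
    Format-Table -Property Name, DisplayName, DisplayValue, RegistryValue -AutoSize

# End-to-end Jumbo frame test towards a hypothetical peer host:
# 8972 bytes of payload + 8 bytes ICMP header + 20 bytes IP header = 9000 bytes,
# and -f forbids fragmentation, so the ping only succeeds if the whole path carries Jumbo frames
ping.exe -f -l 8972 10.0.3.22
```

If the ping reports that the packet needs to be fragmented, some hop in the path (pNIC, vSwitch or physical switch port) is still using a smaller MTU.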

#Other tasks to be performed are the following.

#JOIN EACH HYPER-V SERVER TO AN AD DOMAIN

#ACTIVATE WINDOWS SERVER LICENSING

#RUN WSFC CLUSTER VALIDATION WIZARD FULL TESTS

Test-Cluster -Node $ListOfServers -Include "Inventory", "Network", "System Configuration"

#Create the Hyper-V cluster objects by either using the Failover Cluster Manager mmc console or PowerShell [CMDLETS COMING SOON]

# After all configuration steps are complete, run the following validation tasks

# Check cluster logs and Windows event logs

# Create a test VM via PowerShell and test live migration for networking and storage failover operations [CMDLETS COMING SOON]
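Until the article's own cmdlets are published, the following is one possible sketch of the cluster creation and live migration test using the in-box FailoverClusters and Hyper-V modules. It is not the author's script. All names and addresses (`HV01`, `HV02`, `HVCLUSTER01`, `10.0.1.30`, `Cluster Disk 1`, `TestVM01`, the `C:\ClusterStorage\Volume1` path) are hypothetical, and it assumes that the shared disks are already visible to all nodes and that the test VM is created on the node that runs the commands.

```powershell
# Hypothetical node names, cluster name and cluster IP address
$ListOfServers = 'HV01', 'HV02'
$clusterName   = 'HVCLUSTER01'
$clusterIp     = '10.0.1.30'

# Create the cluster without automatically adding storage
New-Cluster -Name $clusterName -Node $ListOfServers -StaticAddress $clusterIp -NoStorage

# Add the shared disks that are eligible for clustering
Get-ClusterAvailableDisk -Cluster $clusterName | Add-ClusterDisk

# Promote one clustered disk to a Cluster Shared Volume.
# 'Cluster Disk 1' is an assumed resource name; check Get-ClusterResource for the real one.
Add-ClusterSharedVolume -Cluster $clusterName -Name 'Cluster Disk 1'

# Create a small test VM on the CSV and make it highly available
New-VM -Name 'TestVM01' -MemoryStartupBytes 1GB -Generation 2 -Path 'C:\ClusterStorage\Volume1' -SwitchName 'HyperVSwitch01'
Add-ClusterVirtualMachineRole -Cluster $clusterName -VMName 'TestVM01' -Name 'TestVM01'
Start-VM -Name 'TestVM01'

# Live migrate the running test VM to the other node
Move-ClusterVirtualMachineRole -Cluster $clusterName -Name 'TestVM01' -Node 'HV02' -MigrationType Live
```

The test VM has no virtual disk or operating system, which is enough to exercise the migration path. Quorum configuration and cluster network roles are left out of this sketch and still need to be set for a production cluster.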

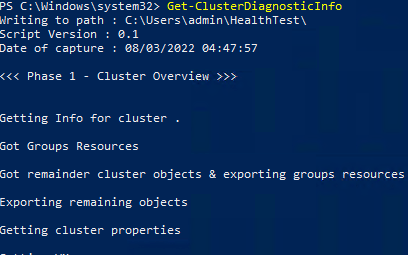

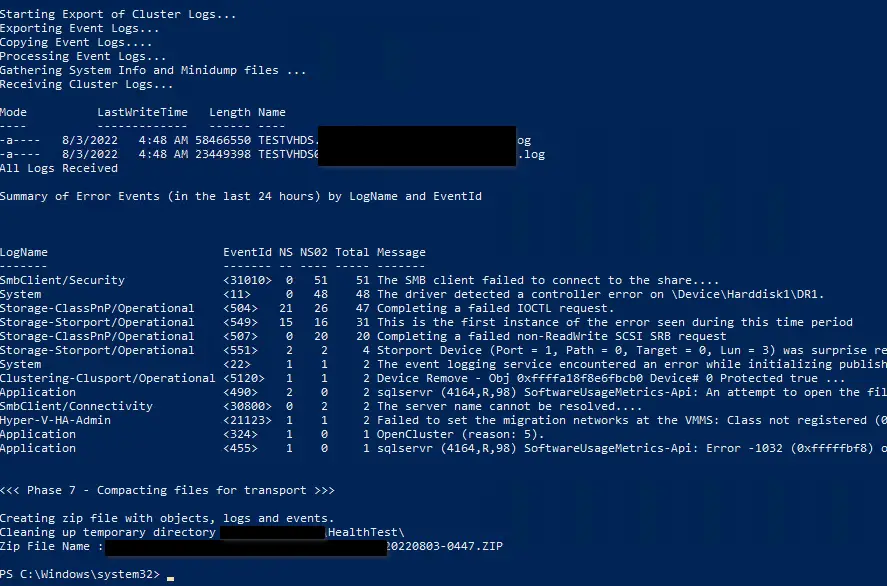

At the very end of the Hyper-V WFC cluster deployment, validate the cluster health by running the following PowerShell cmdlet. It gathers all WFC diagnostic information, combined with operating system information, and produces a single .zip file as output for further investigation. Review the contents of the .zip file and the interactive output of the cmdlet and ensure that there are no issues with your WFC.
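The sketch below shows a basic health check that can be run on any cluster node. Note the assumption: the article does not name the diagnostic cmdlet it refers to. `Get-ClusterDiagnosticInfo` from the FailoverClusters module on recent Windows Server versions is one cmdlet that matches the description, and it is used here as a candidate only. The `C:\Temp\ClusterLogs` folder is a hypothetical path.

```powershell
# State of every cluster node
Get-ClusterNode | Format-Table -Property Name, State -AutoSize

# Any cluster resource that is not online deserves a closer look
Get-ClusterResource | Where-Object { $_.State -ne 'Online' }

# Cluster networks and Cluster Shared Volumes
Get-ClusterNetwork | Format-Table -Property Name, Role, State, Address -AutoSize
Get-ClusterSharedVolume | Format-Table -Property Name, State, OwnerNode -AutoSize

# Collect the cluster logs of the last 60 minutes from all nodes into a hypothetical folder
New-Item -Path 'C:\Temp\ClusterLogs' -ItemType Directory -Force | Out-Null
Get-ClusterLog -Destination 'C:\Temp\ClusterLogs' -TimeSpan 60

# Assumed candidate for the diagnostic cmdlet described above; gathers diagnostics into a .zip file
Get-ClusterDiagnosticInfo
```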

PowerShell script download #

Based on the above cmdlets and analysis, a PowerShell script will soon be made available that takes the Hyper-V hosts as input parameters and configures all Hyper-V failover cluster objects according to the input provided by the end user (coming soon).